Data Driven Price Optimization

Leigha Jarett

Developer Advocate, Looker at Google Cloud

Bertrand Cariou

Sr. Director Solutions & Partner Marketing, Trifacta

When companies can optimize their pricing to take into account market conditions dynamically, they can use it as an important lever for differentiation.

To optimize pricing, organizations need to respond to market changes faster, which means optimizing across a continuum of decisions (such as list price or promotions) and contexts (such as localization or special occasions) in service of multiple business objectives (such as net revenue growth or cross-selling).

“Thanks to a comprehensive approach to monitoring our eCommerce jewelry business, we’ve been reacting dynamically to our pricing and marketing strategy, which resulted in doubling our sales. This wouldn’t have been possible without the Google Cloud smart analytics suite and in particular Dataprep by Trifacta, which gives us the ability to combine and standardize our data in a single view in record time.” Joel Chaparro, Head of Data Analytics, PDPAOLA Jewelry - www.pdpaola.com

Common Data Model: The First Step Toward Trustworthy Pricing Models

Data is the biggest challenge for modern pricing implementation. Building a robust pricing model requires large quantities of data that must be successfully ingested, maintained, and managed among the right stakeholders. All too often, however, organizations face repeated “trust” issues due to a lack of data quality, poor data governance across multiple geographies or business units, and extremely long and inefficient deployments.

One of the first steps towards resolving these issues is developing a Common Data Model (CDM). Having a centralized model for all required data is essential to ensure that business stakeholders have a single source of truth. A CDM defines standards for the key components that influence pricing optimization, including transactions, products, prices, and customers. From there, the standards are applied through a blended data pipeline that feeds the downstream systems using pricing data (business applications, dashboards, microservices, etc.) with one homogeneous data model. The CDM also gives team members a common language, which makes collaboration more consistent.
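As a rough illustration of what such a model might look like, the sketch below expresses the four CDM components as typed records and flattens them into one row. Everything in it is hypothetical: the field names (`product_id`, `list_price`, `region`, and so on) and the `to_cdm_row` helper are assumptions made for illustration, not the schema used in the reference pattern.

```python
from dataclasses import dataclass, asdict
from datetime import date


# Hypothetical CDM entities; all field names are illustrative assumptions.
@dataclass(frozen=True)
class Product:
    product_id: str
    category: str


@dataclass(frozen=True)
class Customer:
    customer_id: str
    region: str


@dataclass(frozen=True)
class Price:
    product_id: str
    list_price: float
    currency: str


@dataclass(frozen=True)
class Transaction:
    transaction_id: str
    product_id: str
    customer_id: str
    sold_on: date
    quantity: int
    net_price: float


def to_cdm_row(txn, product, customer, price):
    """Flatten one transaction and its related records into a single row."""
    if txn.product_id != product.product_id or txn.product_id != price.product_id:
        raise ValueError("product mismatch")
    if txn.customer_id != customer.customer_id:
        raise ValueError("customer mismatch")
    row = asdict(txn)
    row.update(
        category=product.category,
        region=customer.region,
        list_price=price.list_price,
        currency=price.currency,
    )
    # Discount relative to list price, a typical derived pricing attribute.
    row["discount_pct"] = round(100 * (1 - txn.net_price / price.list_price), 2)
    return row
```

Agreeing on record shapes like these up front is what lets dashboards, applications, and models read the same columns with the same meaning.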

BigQuery offers the perfect place to host the CDM table with a scalable infrastructure to run the necessary analysis to discover patterns and trends that guide pricing strategy.

Cloud Data Engineering for Pricing Analytics

Having a Common Data Model and a modern data processing environment such as BigQuery in place is an essential foundation. But the true test of pricing optimization efficiency is how rapidly users can act on this data. In other words, how easily can users prepare data, the first and most critical step in data analysis? Historically, the answer has been “not very.” Preparing data, which can include everything from addressing null values to standardizing mismatched data to splitting or joining columns, is a time-consuming process requiring great attention to detail and, often, considerable technical skill. It can take up to 80% of the overall analytics process. But by and large, it’s worth the extra time spent: properly prepared data can make the difference between a faulty final analysis and an accurate one.
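To make those preparation tasks concrete, here is a minimal sketch in plain Python of the three mentioned above: handling null values, standardizing mismatched values, and splitting a column. The record layout (`country`, `sku_color`, `quantity`) and the alias table are invented for illustration; in practice, a tool such as Dataprep would apply comparable steps visually and at scale.

```python
def clean_record(raw):
    """Return a standardized copy of one raw sales record (hypothetical schema)."""
    # Hypothetical lookup that maps mismatched spellings to one canonical value.
    country_aliases = {"usa": "US", "u.s.": "US", "united states": "US", "us": "US"}

    country = (raw.get("country") or "").strip().lower()
    # Split a combined "SKU-COLOR" column into two separate columns.
    sku, _, color = (raw.get("sku_color") or "").partition("-")
    # Keep missing quantities as None so they can be reviewed, not silently guessed.
    quantity = int(raw["quantity"]) if raw.get("quantity") not in (None, "") else None

    return {
        "country": country_aliases.get(country, country.upper() or None),
        "sku": sku.strip() or None,
        "color": color.strip().lower() or None,
        "quantity": quantity,
    }
```

For example, `clean_record({"country": " U.S. ", "sku_color": "RNG01-Gold", "quantity": "3"})` yields a record with country `US`, SKU `RNG01`, color `gold`, and quantity `3`. Leaving unknown values as `None` is one possible policy; another team might prefer defaults or rejecting the row.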

Google Cloud’s answer to data preparation and building scalable data pipelines is offered through the data engineering cloud platform Google Cloud Dataprep by Trifacta. This fully managed service leverages Trifacta technology and allows users to access, standardize, and unify required data sources for pricing analysis.

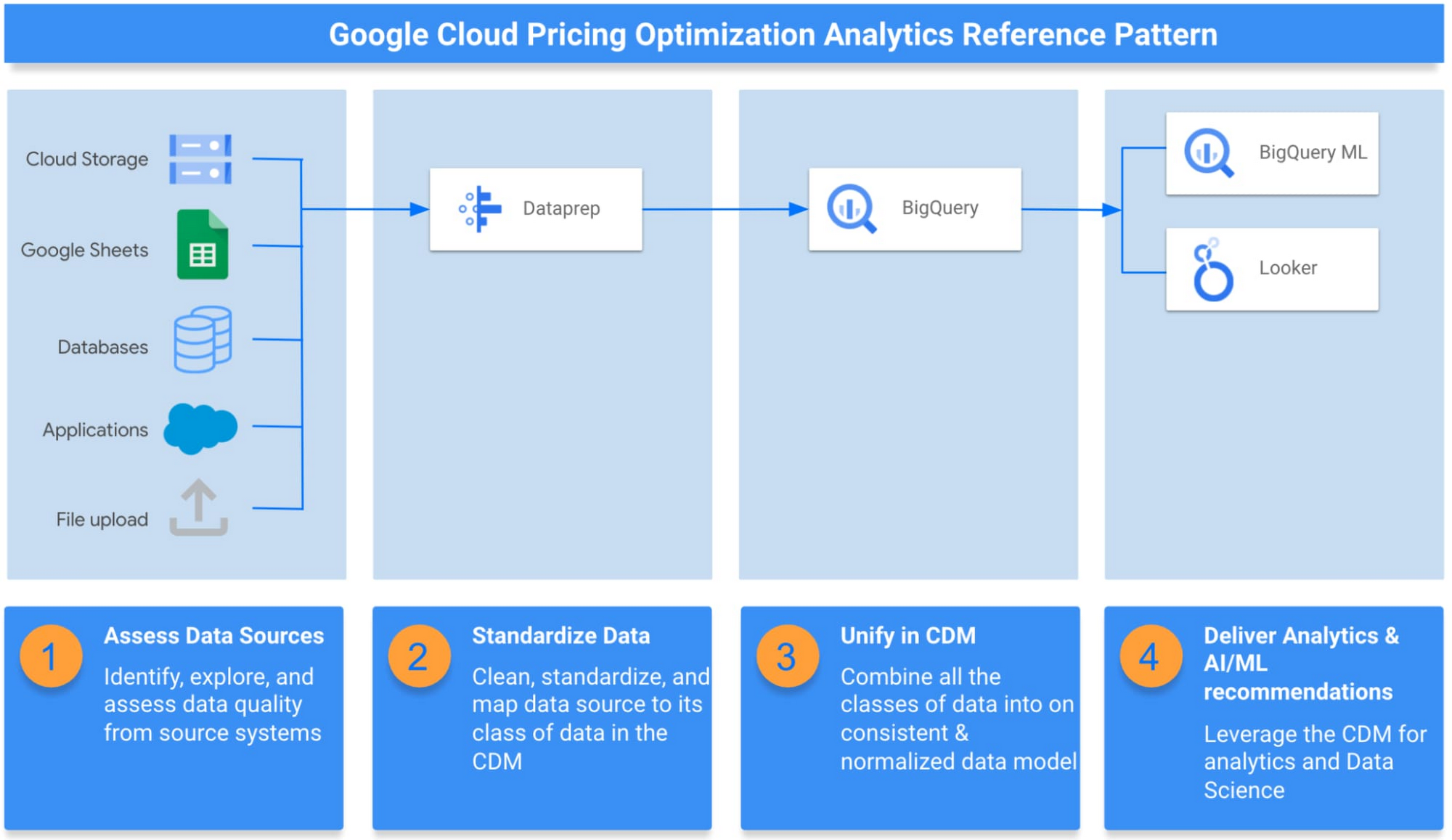

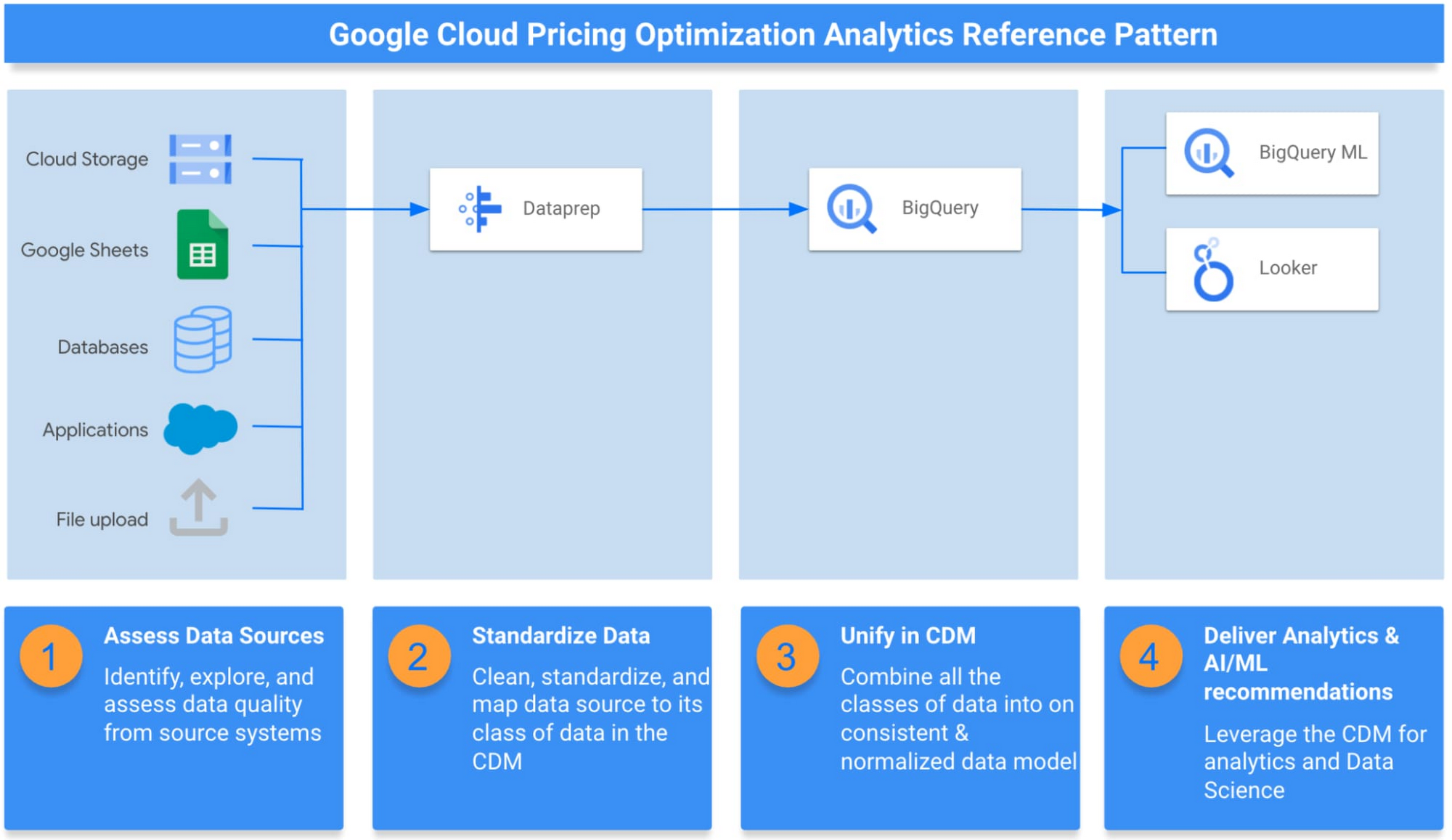

Google Cloud Pricing Optimization Analytics Reference Pattern

Reference Pattern for Pricing Optimization

To enable your organization to succeed in a data-led strategy for pricing optimization, we have put together a pricing optimization reference pattern that provides the guidance you need to kick-start your solution. The reference pattern includes the necessary components (Dataprep, BigQuery, BigQuery ML, Looker), the guidelines, and the sample assets you need to test the solution with sample data, build dashboards, and create predictive models to guide your pricing strategy. In the reference pattern, we walk you through the following:

- Assess Data Sources

Pricing optimization often requires analyzing several different data sources, including historical transactions, list prices for products, and metadata about products and customers. Each source system will have its own way of describing and storing data, and each may also have different levels of accuracy or granularity. In this first step, we connect and explore disparate data sources in Dataprep to understand discrepancies in the data and make decisions on how to combine sources.

- Standardize Data

After identifying the source systems and assessing their data quality, the next step is actually resolving those issues to ensure data accuracy, integrity, consistency, and completeness.

- Unify in One Structure

Once the data has been cleaned and standardized, the different sources are joined in a single table consisting of attributes from each data class at the finest level of granularity. This step creates a single, de-normalized source of trusted data for all pricing optimization work.

- Deliver Analytics & ML/AI

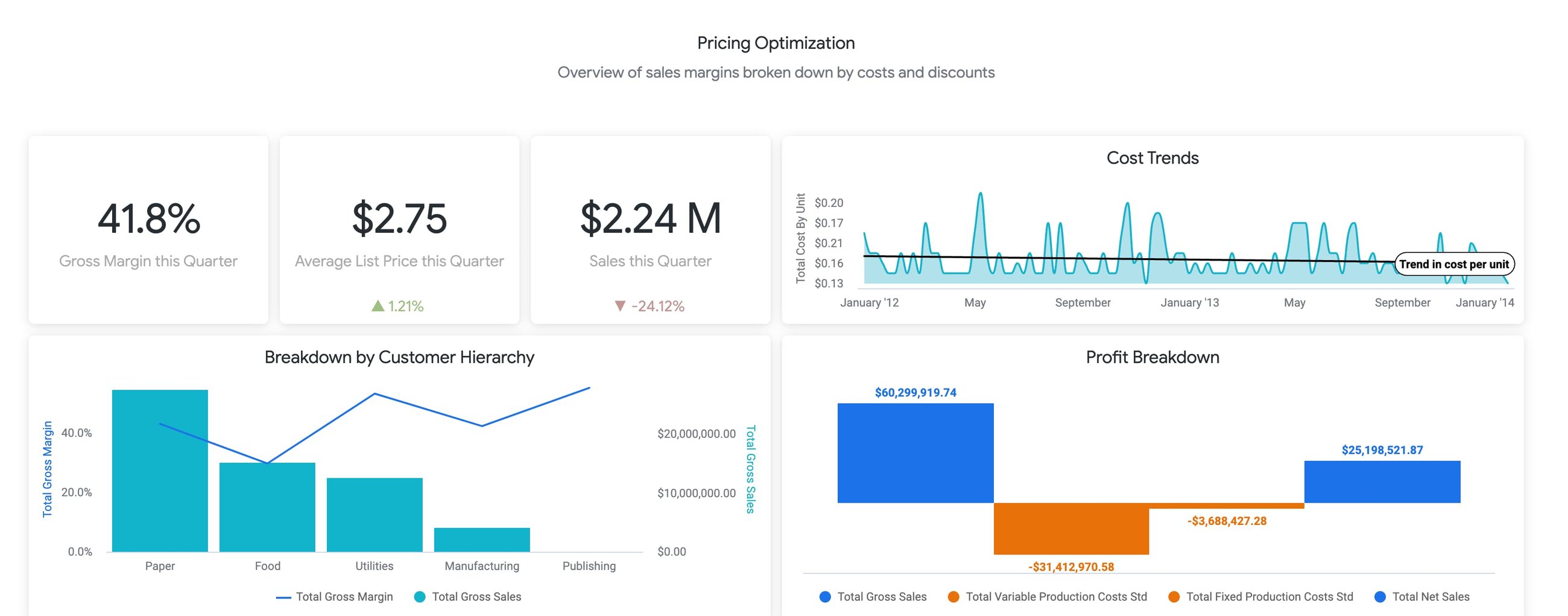

Once data is clean and formatted for analysis, analysts can explore historical data to understand the impact of prior pricing changes. Additionally, BigQuery ML can be used to create predictive models that estimate future sales. The output of these models can be incorporated into dashboards within Looker to create “what-if scenarios” where business users can analyze what sales may look like with certain price changes.
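The idea behind such a what-if scenario can be sketched in a few lines of plain Python. This is not the model from the reference pattern, which is built with BigQuery ML; it swaps in a simple constant-elasticity (log-log) regression as a stand-in, and the price history below is made up purely for illustration. The function names `fit_log_log` and `what_if` are hypothetical.

```python
import math


def fit_log_log(prices, quantities):
    """Least-squares fit of ln(quantity) = intercept + elasticity * ln(price)."""
    xs = [math.log(p) for p in prices]
    ys = [math.log(q) for q in quantities]
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    elasticity = sxy / sxx
    intercept = mean_y - elasticity * mean_x
    return intercept, elasticity


def what_if(intercept, elasticity, new_price):
    """Predicted quantity and revenue at a candidate price."""
    quantity = math.exp(intercept + elasticity * math.log(new_price))
    return quantity, quantity * new_price


# Made-up history for one product: as price rises, units sold fall.
history_prices = [40.0, 45.0, 50.0, 55.0, 60.0]
history_units = [200.0, 170.0, 150.0, 130.0, 118.0]

intercept, elasticity = fit_log_log(history_prices, history_units)
for price in (42.0, 52.0, 62.0):
    units, revenue = what_if(intercept, elasticity, price)
    print(f"price={price:.2f} units={units:.1f} revenue={revenue:.2f}")
```

A dashboard could expose the candidate price as a user-controlled parameter and chart the predicted units and revenue. A production model would typically account for more than price alone, such as seasonality, promotions, and product attributes.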

With this approach, your organization will start to see dramatic changes to its bottom line, without all the hard work of preparing and transforming data. Check out the sample dashboard here!