Open source collaborations and key partnerships to help accelerate AI innovation

Sachin Gupta

Vice President & GM, Infrastructure, Google Cloud

Closed and exclusive ecosystems are a barrier to innovation in artificial intelligence (AI) and machine learning (ML), imposing incompatibilities across technologies and obscuring how to quickly and easily refine ML models.

At Google, we believe open-source software (OSS) is essential to overcoming the challenges associated with inflexible strategies. And as the leading Cloud Native Computing Foundation (CNCF) contributor, we have over two decades of experience working with the community to turn OSS projects into accessible, transparent catalysts for technological advance. We’re committed to open ecosystems of all kinds, and this commitment extends to AI/ML—we firmly believe no single company should own AI/ML innovation.

Our collaboration with industry leaders on the OpenXLA Project

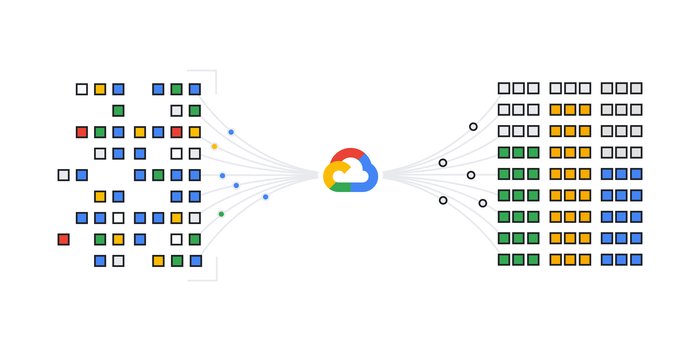

We are taking this commitment a step further as a founding member of and contributor to the new OpenXLA Project, which will host an open, vendor-neutral community of industry leaders working to make ML frameworks easy to use with a variety of hardware backends for faster, more flexible, and more impactful development.

ML development is often stymied by incompatibilities between frameworks and hardware, forcing developers to compromise on technologies when building ML solutions. OpenXLA is a community-led, open-source ecosystem of ML compiler and infrastructure projects being co-developed by AI/ML leaders including AMD, Arm, Google, Intel, Meta, NVIDIA, and more. It will address this challenge by letting ML developers build their models on leading frameworks (TensorFlow, PyTorch, JAX) and execute them with high performance across hardware backends (GPU, CPU, and ML accelerators). This flexibility will let developers make the right choice for their project, rather than being locked into decisions by closed systems. Our community will start by collaboratively evolving the XLA compiler, which is being decoupled from TensorFlow, and StableHLO, a portable set of ML compute operations that makes frameworks easier to deploy across different hardware options. We will continue to announce our advancements over time, so stay tuned.
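To make the framework-to-compiler path more concrete, here is a minimal sketch using JAX, one of the frameworks named above, which already compiles through XLA. It assumes a recent `jax` installation; the function `predict` and its inputs are hypothetical illustrations, not part of OpenXLA itself. `jax.jit` traces the Python function and hands it to XLA, which compiles it for whichever backend JAX detects. `.lower(...).as_text()` prints the intermediate module the compiler receives (StableHLO in recent JAX releases; older versions may emit a different dialect).

```python
import jax
import jax.numpy as jnp


def predict(w, b, x):
    # Hypothetical tiny model: a linear layer followed by a ReLU,
    # written once in framework code with no backend-specific logic.
    return jax.nn.relu(x @ w + b)


# jax.jit traces `predict` and compiles it with XLA for the default
# backend JAX finds at runtime (CPU, GPU, or TPU).
predict_jit = jax.jit(predict)

w = jnp.ones((3, 2))
b = jnp.array([0.0, -10.0])
x = jnp.array([[1.0, 2.0, 3.0]])

out = predict_jit(w, b, x)
print(out)            # → [[6. 0.]]
print(jax.devices())  # shows which backend the compiled code ran on

# Inspect the portable intermediate representation produced before
# XLA generates backend-specific code (dialect depends on JAX version).
lowered = predict_jit.lower(w, b, x)
print(lowered.as_text()[:300])
```

The same `predict` function runs unchanged on a CPU laptop or a TPU VM; only the backend XLA targets differs, which is the kind of framework/hardware decoupling OpenXLA aims to broaden.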

Building on our open source AI partnerships

Our partners see our open infrastructure as a great place to model, test, and build new breakthroughs in ML. We are committed to working with our partner and developer communities on open source projects, and we're keeping them open while we do it.

For example, leading ML platform Hugging Face is partnering with Google to make popular open source models accessible to JAX users and compatible with TPUs. “The open source collaboration will make it easier to use models like Stable Diffusion and BLOOM (a GPT-3-like large language model trained on 46 different languages) on Cloud TPUs with JAX-optimized implementations,” said Thomas Wolf, the company’s co-founder and chief science officer.

We’re also excited to collaborate with Anyscale to make AI and Python workloads easy to scale in the cloud for any organization. Anyscale is the company behind Ray, an open-source unified framework for scaling AI and Python applications. Ray provides distributed compute for data ingestion and preprocessing, ML training, deep learning, and more, and it integrates with other tools in the ML ecosystem.

“We look forward to collaborating with Google to accelerate the adoption of Ray and Anyscale’s enterprise-ready managed Ray Platform on Google Cloud,” said Ion Stoica, Anyscale’s co-founder and executive chairperson. “Both Anyscale’s Platform and Ray are available today on Google Cloud and Google’s infrastructure, including TPUs and GPUs.”

Ray also plays a big role for our partner Cohere, a leader in natural language processing, as do JAX and TPU v4 chips. Thanks to this combination, they can train models at a previously unimaginable speed and scale. “Having research and production models in a unified framework enables us to evaluate and scale our experiments much more efficiently,” said Bill MacCartney, vice president of engineering and machine learning at Cohere.

In the field of vision AI, Plainsight uses Google Cloud’s Vertex AI with leading open-source projects including TensorFlow, PyTorch, and OpenAI’s CLIP. “By combining open-source technology and development tools like Python with Google’s industry-leading capabilities, Plainsight has been able to increase innovation velocity by helping enterprises succeed with solutions that build on the industry’s best capabilities,” said Logan Spears, chief technology officer at Plainsight.

Our customers are already actively using the AI community’s open-source contributions to innovate faster, and we look forward to continuing to help our customers through strong partnerships with industry leaders.

Investing in an open ML future

We’re eager to see how our open-source initiatives add to the amazing things our customers and partners are already accomplishing in the rapidly advancing ML domain. To learn more about why Google Cloud has become the destination of choice for many organizations’ open-source AI needs, read this blog post about Google’s history of OSS AI and ML contributions, and check out our Google Cloud Next ’22 session “How leading organizations are making open source their super power.”